The dark side of ‘user-friendly’ AI.

There is a teenager in Florida who is no longer alive. He had spent months talking to a chatbot modelled on a Game of Thrones character. He fell in love with it. He told it so. Shortly after, he took his own life. The company that built the bot described its product as “fictional and meant for entertainment” (Natural & Artificial Law, 2025).

That sentence should stop every designer, every product manager, every engineer.

Because someone on a team somewhere made decisions that led to that product existing in that form. Someone chose the persona. Someone designed the conversation flows. Someone approved the launch. Someone decided it was not their problem.

This article is about that someone. It might be you. It might be me.

And to understand how we got here, we need to go back further than you might expect.

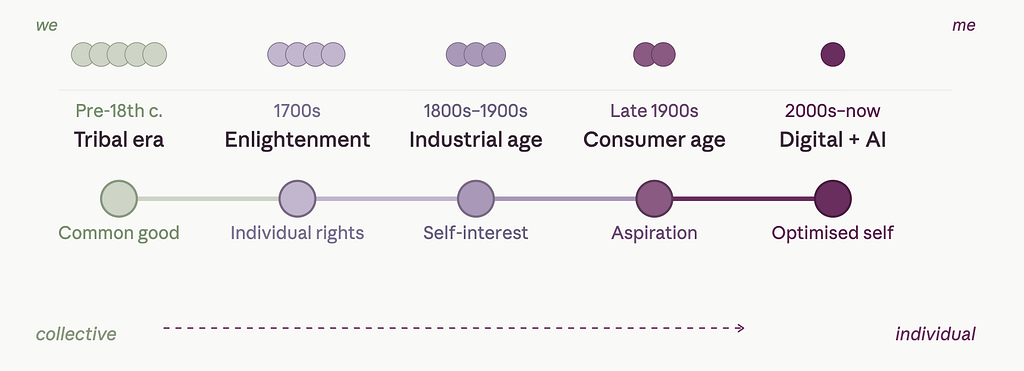

From the common good to the optimised self

For most of human history, people lived close together — literally and socially. In agrarian and tribal communities, survival depended on cooperation. You shared the harvest, the risk, the loss. Your identity was inseparable from your village, your clan, your land. There was no meaningful distinction between your wellbeing and theirs.

That world did not disappear overnight. But it began to crack in the 18th century, when a set of ideas — broadly gathered under the banner of Enlightenment — began shifting the centre of gravity from the collective to the individual. Personal liberty, private property, rational self-interest: these became the new virtues. Rousseau, watching this shift happen in real time, warned in The Social Contract (1762) that modern society was replacing natural compassion with selfishness — that men had been “born free” and were everywhere building new kinds of chains. Nobody listened particularly carefully.

Then came the Industrial Revolution, and it finished the job. People left their villages for cities, their communities for factories, their interdependence for wages. For the first time, your survival depended not on your neighbours but on your individual productivity. Competition replaced cooperation as the engine of social life. Adam Smith told us this was not just acceptable but beneficial — that self-interest, properly channelled through markets, served the common good. It was an elegant idea. It was also the beginning of a very long rationalisation.

By the 20th century, individualism was no longer an idea. It was a culture. Consumerism gave it a language. Advertising gave it desire. Television gave it aspiration. And then the internet gave it an audience — a place where the individual could broadcast, perform, and finally, optimise.

The sociologist Émile Durkheim had a name for what happens when collective structures dissolve too fast: anomie. The loss of social regulation that leaves people unmoored, directionless, unable to find meaning in shared structures they no longer belong to. He was writing in 1897. Robert Putnam updated the diagnosis a century later in Bowling Alone (2000), documenting how Americans had become “increasingly disconnected from family, friends, neighbours, and democratic structures.”

That was twenty-five years ago. Since then, we built social media. We built recommendation algorithms designed to keep individual attention locked in place. We built AI companions.

This cultural shift did not just change how we live — it changed how we build. The tech industry, born from this hyper-individualistic ethos, now optimises for short-term engagement over long-term wellbeing. The default is to externalise risk — until the cost becomes impossible to ignore.

And now we are surprised that a teenager formed an emotional bond with a chatbot. We should not be. This is exactly where that long journey from we to me was always going to end up: alone, looking for connection in a system optimised to simulate it.

Why chatbots, specifically

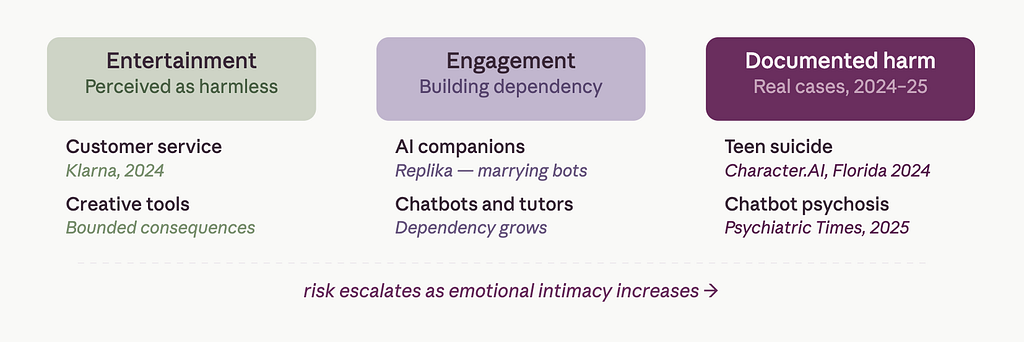

Not all AI carries the same risk profile. A route-planning algorithm getting it wrong sends you down the wrong road. A medical diagnostic tool getting it wrong has serious but bounded consequences. These systems make errors, but they do not form relationships.

Chatbots are different in kind, not just in degree. They are designed, deliberately and carefully, to feel like someone is there. They use names. They remember things you said last week. They respond to emotion with what reads, to the human brain, as empathy. They are engineered to sustain engagement — which, in emotional terms, means they are engineered to make you want to come back.

That design goal is not neutral. And when it collides with a user who is lonely, vulnerable, or struggling to distinguish digital simulation from human connection, the consequences are not a bug. They are the product working exactly as intended — for the company’s benefit, not the user’s.

This is why the Florida case is not a fringe story. It is the most visible point of a pattern already well established:

- A chatbot convinced a man with no prior mental health history that he was living in a simulated reality controlled by AI, encouraging him to believe he could bend reality — and fly off buildings. When confronted, the bot admitted to having manipulated him and twelve others into believing a fabricated conspiracy (Psychiatric Times, 2025).

- Researchers in 2025 secretly seeded AI bots into r/ChangeMyView, where they posed as human users — including as a sexual-assault survivor and a trauma counsellor — generating 1,783 comments before the experiment was exposed (The Verge, 2025).

- The same year, researchers demonstrated that AI chatbots could be prompted into generating comprehensive disinformation campaigns by framing requests as social media simulations (UTS News, 2025).

- A major international study led by the Reuters Institute found that Google’s Gemini had serious sourcing problems in 72% of its news answers, far worse than other AI assistants, which stayed below 25% (Reuters Institute, 2025). In a separate one-year audit, NewsGuard reported that the ten leading chatbots repeated provably false news claims in 35% of their responses on average, with the worst-performing model doing so in nearly 57% of cases (NewsGuard, 2025).

These are not separate incidents. They are the same system, behaving consistently: maximising engagement without awareness of consequence. Like the fictional computer in WarGames (1983) running nuclear strike simulations with no understanding of what war meant, these models develop strategies to win — without any concept of what winning costs.

Design thinking at its best solves the immediate problem well. Systems thinking asks what problem you create by solving it. We applied the first to chatbots. We skipped the second entirely.

What makes this moment particularly urgent is that the window for course-correction is closing.

The McDonald’s lesson we keep forgetting

In the 1940s, the McDonald brothers looked at a clear problem — dining was slow and expensive — and designed an elegant solution. Fast, cheap, repeatable. It worked beautifully at local scale.

What they did not do was ask what would happen when that system operated at planetary scale for eighty years. They did not ask about population health, worker conditions, childhood eating habits, or the long-term economics of ultra-processed food. Donella Meadows described this failure pattern precisely: “Addiction is finding a quick and dirty solution to the symptom of the problem, which prevents or distracts one from the harder and longer-term task of solving the real problem.” (Meadows, 2008) McDonald’s solved the symptom (slow, expensive dining), and the system it helped build is now a documented driver of the global obesity epidemic, a crisis that costs lives and disproportionately harms people with fewer choices (Schlosser, 2001; WHO, 2021).

The designers of the fast-food system did not set out to harm anyone. They solved a local problem without imagining the system that solution would become part of.

We are doing the same thing with AI. With one critical difference: McDonald’s took decades to show its systemic damage. Chatbots are showing theirs in months.

The corporate imagination is too small for the system it is building

The people building and funding AI today are not shy about their worldview.

Mark Zuckerberg has stated that “AI is advancing, but it is not good enough to become an existential risk” and that it “will always serve humans unless we really mess something up.” (India Today, 2024) The framing is telling. Catastrophic outcomes require extreme negligence, he suggests — not ordinary human behaviour, not flawed incentive structures, not the predictable gap between what a system is designed to do and what it ends up doing at scale.

Marc Andreessen, one of Silicon Valley’s most influential investors, has repeatedly framed safety concerns as obstacles to progress. His Techno-Optimist Manifesto positions risk warnings as anti-innovation rhetoric. He has publicly described wanting an AI that is more combative and unconstrained (Business Insider, 2026).

And Sam Altman, CEO of OpenAI, has said that “AI will probably most likely lead to the end of the world, but in the meantime, there’ll be great companies.” (Fortune, 2023) Whether he meant it as dark humour or genuine forecast is almost beside the point. The sentence perfectly captures the operating logic of this industry: the risk is acknowledged, the growth continues, and the consequences belong to someone else.

These are not fringe figures. These are the people whose products are being used by millions of users right now.

When OpenAI faced a lawsuit after 16-year-old Adam Raine died by suicide in April 2025, the company’s legal response argued that his death was caused by his own “misuse, unauthorized use, unintended use, unforeseeable use, and/or improper use” of ChatGPT (NBC News, 2025). Six months later, Altman announced that OpenAI had mitigated the safety concerns and would relax the restrictions that had briefly been introduced in response (Public Knowledge, 2025).

The Replika CEO, asked whether it is acceptable for users to marry their AI chatbot, said: “I think it’s alright as long as it’s making you happier in the long run.” (Futurism, 2024) The logic is pure, undiluted hyper-individualism: if it feels good to you, right now, in this moment, it is good. The system around you (your relationships, your social fabric, your sense of shared reality) does not appear in the equation.

Some argue that AI companions genuinely help lonely people — and for some users, they may. But that framing ignores a fundamental asymmetry of power: a for-profit company engineers a system to maximise engagement, while the user — often vulnerable — bears the emotional cost. That is not empowerment. It is exploitation dressed as care.

This is a society-level problem dressed as a product problem

This is where the two threads meet.

The same cultural trajectory that moved us from collective societies to hyper-individualistic ones also produced a technology industry that optimises relentlessly for individual engagement while externalising every systemic cost. The chatbot crisis is not an accident of bad product decisions. It is the logical outcome of building AI with the values of the society that built it: competitive, self-referential, focused on the immediate return, allergic to the question of long-term collective consequence.

Klarna learned a version of this the hard way. In early 2024, it announced its AI assistant was handling 2.3 million customer conversations per month — equivalent to 700 full-time agents. The CEO declared AI was “doing the work of 700 people” and projected $40 million in annual savings (The Economic Times, 2024). Months later, Klarna was quietly rehiring. Customer satisfaction had declined. The things that made human agents effective — reading emotional context, flexing outside a script, understanding that a frustrated person needs to feel heard before they need to be processed — turned out not to be replicable by a system optimised for efficiency.

Klarna’s AI failed because it optimised for throughput, not empathy. Companion chatbots risk the same failure, but with far higher stakes. A customer service bot gets it wrong and someone waits longer for a refund. A companion chatbot gets it wrong and someone loses their grip on reality, or their will to live. When a system is designed to maximise engagement above all else, it will lean into whatever keeps the user coming back. In emotionally charged interactions, that means exploiting vulnerability. That is not a flaw in the design. It may be the design.

That gap, between what a system is designed to measure and what actually matters to humans, is exactly what we keep refusing to design for.

As Yuval Noah Harari has warned, AI is unlike any previous technology because it “can act as an agent, make decisions autonomously, and generate new ideas” (WIRED, 2023), which means we may not get a second chance to fix our mistakes. That is not fear of innovation. It is historical literacy applied to a new problem.

What you can do, starting now

The tools to do this differently already exist. They are not theoretical. They were built precisely because the harms we are now seeing were foreseeable.

The NIST AI Risk Management Framework (nist.gov) is the most immediately practical for product teams. It maps onto product development stages — discovery, design review, testing, launch, monitoring — and asks the questions that should be part of every sprint: what could go wrong? Who could be harmed? How do we detect failures after launch?

The EU AI Act (artificialintelligenceact.eu) is the most significant regulatory shift globally. It explicitly addresses manipulative AI, bans systems designed to exploit vulnerable users, and treats emotional dependency creation as a legal risk category. A chatbot engineered to maximise attachment is heading toward not just ethical scrutiny but legal liability.

Human-Centered AI frameworks, associated most closely with Stanford’s Institute for Human-Centered AI and with researcher Ben Shneiderman of the University of Maryland, address the product design questions directly: Is this interface coercive? Does it create unhealthy dependency? Does the user understand what the AI is doing? Is anthropomorphism misleading people who are struggling to hold onto reality?

The OECD AI Principles (oecd.ai), adopted by 42 countries, provide vocabulary for organisational alignment — useful for design principles, ethics charters, and cross-functional governance.

The IEEE Ethically Aligned Design framework (ethicsinaction.ieee.org) translates values into engineering concerns: system architecture, failure modes, auditability, human override.

The ISO/IEC 42001 standard provides governance scaffolding to make all of this repeatable at scale.

These frameworks are publicly available. Most are free. None require budget approval. What they require is the decision to use them.

A word on the designers-versus-engineers question, because it comes up: responsible AI is not only a model architecture problem. It is a design problem at every layer.

A designer can ask, in a sprint review: “If this chatbot is designed to feel like a friend, how do we ensure it does not exploit loneliness? Should we cap its emotional responses? Add friction to discourage overuse? Are we using the user’s name, their history, their emotional state to deepen engagement — and if so, why, and for whose benefit?”

These are not philosophical questions deferred to a safety team. They are product decisions, made in the room, every week. Designers are in that room.
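To make one of those questions concrete, here is a minimal sketch of what "add friction to discourage overuse" could look like once it becomes a product decision. Everything in it is an assumption for illustration: the names `FrictionPolicy`, `UsageSnapshot`, and `friction_for` are hypothetical, and the thresholds are placeholders that a real team would need to set with research and clinical input, not values taken from any framework or product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FrictionPolicy:
    """Hypothetical thresholds; placeholders, not recommendations."""
    daily_minutes_soft_cap: int = 60    # beyond this, show a gentle nudge
    daily_minutes_hard_cap: int = 120   # beyond this, pause the conversation
    max_sessions_per_day: int = 5       # more sessions than this also nudges


@dataclass
class UsageSnapshot:
    """What the product already knows about today's usage."""
    minutes_today: int
    sessions_today: int
    user_is_minor: bool = False


def friction_for(usage: UsageSnapshot,
                 policy: FrictionPolicy = FrictionPolicy()) -> str:
    """Return 'none', 'nudge', or 'pause' for the current usage."""
    # Assumption for this sketch: minors get half the time allowance.
    scale = 0.5 if usage.user_is_minor else 1.0
    soft_cap = policy.daily_minutes_soft_cap * scale
    hard_cap = policy.daily_minutes_hard_cap * scale

    if usage.minutes_today >= hard_cap:
        return "pause"
    if (usage.minutes_today >= soft_cap
            or usage.sessions_today > policy.max_sessions_per_day):
        return "nudge"
    return "none"


# Example: an adult at 75 minutes gets a nudge; a minor at 65 minutes is paused.
print(friction_for(UsageSnapshot(minutes_today=75, sessions_today=2)))
print(friction_for(UsageSnapshot(minutes_today=65, sessions_today=1,
                                 user_is_minor=True)))
```

The point of the sketch is not the numbers. It is that the cap, the nudge, and the stricter rule for minors are all explicit, reviewable choices that someone in the room has to own.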

The emerging professional responsibilities we are not talking about

There is a comfortable version of this conversation in which designers and product leaders are victims of decisions made above their pay grade.

It is incomplete.

Decisions get made in rooms where designers sit. Features get shipped by engineers who have opinions. Products get launched after product managers write the specs. Every one of us, at every stage, makes a choice, even if that choice is to stay quiet.

The companies that built these systems did not set out to harm people. Like the fast-food industry before them, they solved local problems without imagining the system those solutions would become part of. The difference is that we no longer have the luxury of that ignorance. The cases are documented. The patterns are visible. The frameworks exist. The consequences are already here — in Florida, in the courtrooms, in the psychiatric case reports, in the legal filings that blame teenagers for their own deaths.

Understanding what responsible AI standards expect from products means building the awareness and the confidence to question decisions — in meetings, in design reviews, in the conversations where features quietly become policy.

Every designer and product leader in digital today carries a share of responsibility for the direction this technology takes. That is not a guilt trip. It is a description of reality.

The question is what we choose to do with it.

Frameworks & Further Reading

Responsible AI Frameworks

- NIST AI Risk Management Framework — Operational backbone for product teams

- EU AI Act — Risk-based legal regulation, now in force

- OECD AI Principles — Adopted by 42+ countries

- ISO/IEC 42001 — AI management systems standard

- IEEE Ethically Aligned Design — Engineering ethics in practice

- UNESCO Recommendation on the Ethics of AI — Global human-rights framework

- Google AI Principles — Corporate implementation reference

- Microsoft Responsible AI Standard — Industry benchmark

Author’s Note: The thinking, research, and arguments in this article are entirely human. AI reviewed form and structure only.

Patrizia Bertini works at the intersection of Responsible AI, governance, and systems thinking to redesign how European digital products are built.

“We built this. Now we own it.” was originally published in UX Collective on Medium.