Conversational Design based on effective communication.

A lot is changing

Like many other Product Designers in our field, I’ve been in the throes of rapid change on a number of levels due to the advent of AI. Roles are changing and adapting. Innovation and experimentation are rampant. You’re likely using AI in a variety of ways, perhaps daily. As designers, the mediums we work in and how we interact with them are changing on a dramatic scale. Just a few years ago, we were only worried about screens and flows. Now we’re orchestrating agentic workflows across channels. Honestly, it is exciting and scary all at the same time.

“Growth and comfort do not coexist.” — Ginni Rometty

Recently my team and I set out to form our own formal philosophy and approach to Conversational Design. I’d like to share the insights and ideas I’ve amassed on the promise of effectively interacting in this medium.

There’s not just one right interface for AI

In this new era of conversational interaction, our potential patterns of interfacing have multiplied. An interface can consist of only text or voice, or it might also include some visual aids or graphical prompts. Layer in more intelligence and now we’re talking about an experience that adapts, changing its response form on the fly.

This means we’re now considering everything from the ol’ standby, GUIs (Graphical User Interfaces), to Conversational User Interfaces, Adaptive Intelligent Interfaces and even automated portions of workflows and processes. We’re now strategically deciding when and where humans are in the loop. If it’s a spoken interaction, like an IVR system, we have to consider factors like tone, volume and pace. There are even more onion layers when considering agentic ecosystems and relationships: When does one agent pass ownership to another? What should each agent be responsible for in function and communication, and how?

For example, in a multi-agent system where a ‘Travel Agent’ AI might converse with a ‘Weather Agent’ and a ‘Booking Agent’ to build your itinerary, ensuring they can communicate with clarity and share concepts in a balanced exchange is critical. Just like humans need clear handoffs to avoid confusion, these agents will require structured ‘dialogue’ to ensure the user’s original intent isn’t lost in translation between systems.
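To make the idea of a structured handoff concrete, here is a minimal sketch in Python. The `Handoff` schema, its field names, and the agent names are all assumptions made up for illustration, not an established protocol. The point is only that the user's original request travels with every handoff, alongside whatever facts the agents have gathered so far.

```python
from dataclasses import dataclass, field


@dataclass
class Handoff:
    """A structured message one agent passes to another (hypothetical schema)."""
    user_intent: str   # the user's original request, carried along unchanged
    from_agent: str
    to_agent: str
    task: str          # what the receiving agent is being asked to do
    context: dict = field(default_factory=dict)  # facts gathered so far


def hand_off(previous: Handoff, to_agent: str, task: str, **new_facts) -> Handoff:
    """Build the next handoff, keeping the original intent and accumulated context."""
    return Handoff(
        user_intent=previous.user_intent,
        from_agent=previous.to_agent,
        to_agent=to_agent,
        task=task,
        context={**previous.context, **new_facts},
    )


# Illustrative values only: the destination and tasks are invented for the example.
first = Handoff(
    user_intent="Help me plan a fun trip this summer",
    from_agent="travel_agent",
    to_agent="weather_agent",
    task="Check typical July weather for the shortlisted destinations",
    context={"month": "July"},
)
second = hand_off(first, "booking_agent", "Find flights", best_weather="Lisbon")

print(second.user_intent)
print(second.context)
```

Because each handoff is built from the previous one, the Booking Agent still sees exactly what the user asked for, even though it never spoke with the user directly.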

What’s a CUI good for?

Conversational User Interfaces, or CUIs, are great for specific modes of engagement. As Dan Saffer described in his workshop, Designing for AI: New Techniques, CUIs are excellent when the expected or desired outcome is fuzzy: you can conversationally explore, brainstorm or wind your way through to clarity. Asking for a suggestion, for example, might also mean that the AI needs to ask follow-up questions to refine context before confidently providing a recommendation. “Help me plan a fun trip this summer” might be followed by “Can you tell me about your budget for the trip?” Or “What’s a good place to grab lunch near me?” could see a response like “Do you have any dietary restrictions or preferences?”

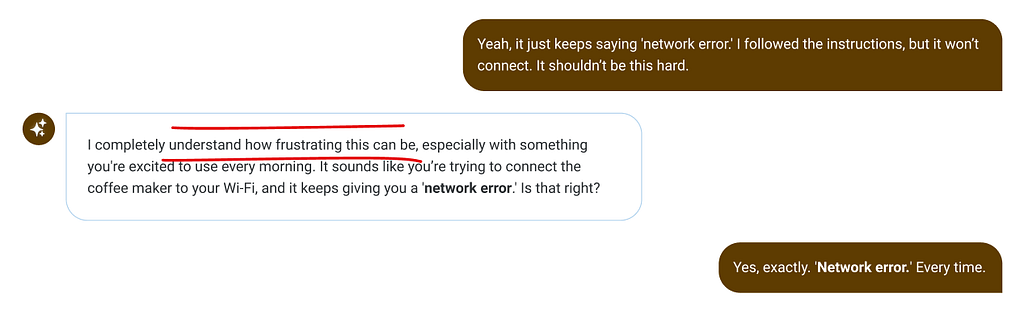

“I can’t connect my smart coffee maker to Wi-Fi” might look like the following:

Imagine an interior designer. They may start their process with you by presenting color swatches and looking through sample materials. The desired outcome is alignment. If you think about it, this turn-based exchange aimed at refinement is not dissimilar to a conversation, just with visual and textural aids, body language and spoken words all mixed together. By presenting new options as each choice is made, a result is eventually arrived at. Fuzzy eventually becomes clear.
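The follow-up-question pattern described in this section can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `CLARIFYING_QUESTIONS` table and the `next_question` helper are hypothetical, and only the budget question comes from the example above. The other two questions are invented to show the shape of the idea.

```python
from typing import Optional

# Hypothetical details a trip-planning assistant wants before recommending anything.
# Only the budget question appears in the article; the rest are made up.
CLARIFYING_QUESTIONS = {
    "budget": "Can you tell me about your budget for the trip?",
    "companions": "Who will be traveling with you?",
    "interests": "What kinds of activities do you enjoy?",
}


def next_question(known: dict) -> Optional[str]:
    """Return the next follow-up question, or None once enough context is known."""
    for detail, question in CLARIFYING_QUESTIONS.items():
        if detail not in known:
            return question
    return None


known = {}
print(next_question(known))   # asks about budget first
known["budget"] = "around $2,000"
print(next_question(known))   # moves on to the next missing detail
```

Each answer narrows the space a little, which is the "fuzzy eventually becomes clear" loop in miniature. A real assistant would infer missing details from free text rather than from a fixed table.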

Are we having a conversation?

One frustration I have within this emergent tech bubble we’re currently trudging through is vernacular. For example, saying something is “conversational” means different things to different folks. Some might hear “conversational” in this context and expect a purely text-based exchange. I tend to consider a conversation to be any exchange between two parties seeking mutual understanding. Conversations are full of hypotheses, problem solving, brainstorming, testing, translations, metaphors and analogies, all for the sake of disambiguation.

Everything IS a conversation

In the way that I think about our future living alongside AI, no matter what interface medium (chat, voice, GUIs, etc.) we’re using, we’ll be having conversations. I’d posit that traditional app and web experiences have been, in a sense, conversations too, just flatter: more potential pathways to outcomes are presented all at once, so fewer turns are needed thanks to a more explicitly mapped form of communication. Think about any nested menu within a website or app you engage with. Each selection or choice reveals an additional level of choices. You take turns to arrive at something more specific.

Multimodal + adaptation ~ human

Looking at this era of AI innovation, we’re already seeing blended examples mixing inputs together. Tools like Gemini, ChatGPT and Claude are themselves multimodal-capable, able to take in anything from text to images to videos as part of the communication. On some levels this more closely mimics human processing capabilities, where a conversational exchange might include text or vocal responses in one turn and then visual aids or gestures in the next.

Not only do humans communicate with each other through a multimodal approach (spoken language, body language, gestures, etc.), but we adapt as the conversational exchange unfolds. An intelligent, adaptive interface (AUI) mirrors this quite a bit in concept: it might use words to converse at first, then opt to show something visual when that conveys the point more succinctly. Or maybe it’s better to offer a small set of potential response choices to a question in order to reduce errors. What’s interesting to me here is that adaptation across communication, while using multiple modes of sharing information, feels humanly familiar.

Conversational Design

Knowing that Conversational Interfaces will continue to be a part, if not a foundation, of how we engage and interact with Artificial Intelligences for the foreseeable future, I really wanted to shore up my own and my team’s expertise in Conversational Design specifically.

When it comes to a CUI, I started out wondering what leads to an effective solution. Why, for example, are folks actively accepting and adopting experiences like GPT and Copilot, but averse to classic chatbots? I previously dug into this topic in another article, which focused more on surpassing novelty, building trust and achieving adoption.

In summary, while adoption is achieved through surviving novelty and building trust, it is the ability to hold an effective conversation that truly creates rapport and ensures a trusting relationship.

Effective Conversational techniques are the way

So what does “Effective Conversation” look like? When you’re face-to-face with another human, much of what gets conveyed comes through our actual faces, otherwise known as the original interface. We’ve evolved to see and detect all kinds of nonverbal cues from other humans. Unfortunately, nonverbal signals aren’t necessarily available in a digital interaction (at least until the robots with emotive faces take over). This puts even more importance and dependency on the remaining facets of a digital-based conversation.

Conversations are fluid

How do you know the experience is following you contextually? How can you be sure the intelligence IS intelligent enough to appropriately respond and not just make shit up?

One of the big discrepancies between effective and lackluster conversational experiences is how well the experience conveys its understanding and reassures you. It should understand each input and track the evolution of context across exchanges. On one hand, this is about the intelligence being intelligent enough to carry and shape context across a turn-based exchange. On the other, the intelligence needs the knowledge and resources to navigate successfully to your desired outcome. You might consider these Conditions of Truth when thinking about an effective conversation. Both subject matter expertise and conversational capability are required.

Conversational communication is imperfect

How would you ever know exactly what to say in conversation to achieve your precise outcome in return?

It’s a very human thing to loosely navigate your way to an outcome via conversation. However, conversationally approaching an outcome is far from a perfect method. I’m certainly guilty of fumbling my way to an outcome via conversation from time to time. Whether you’re speaking with an AI or an intelligent human, you’re engaging with a completely enclosed external system or entity. What it can offer, contribute and discern is completely unknown until you begin winding through conversation.

In contrast, the familiar GUIs of our day-to-day have evolved to effectively show you all possible options — organized and structured, all at once (or at least in logical levels). GUIs are often explicit. If you take your time to explore and digest what’s available, you can easily understand the entire system you’re about to engage with.

While conversationally interacting may not be perfect, it is still very possible to positively influence the interaction and outcome by holding an effective conversation.

Holding an effective conversation

(minus non-verbal cues)

I want to reiterate that I’m considering conversations to be more than just spoken or text-based exchanges between parties and more broadly a turn-based form of interaction. As Erika Hall frames it in her wonderful book, Conversational Design — every interaction between a person and a product IS a conversation. In this sense, spoken and text-based exchanges do fit within this definition along with so much more conceptually. If you zoom out, you can imagine applying these to agentic systems as well — enabling effective communication between AI agents in a variety of mediums.

Humans are already good at conversations

What can we learn from observing effective human-to-human spoken conversations and communication practices?

As humans, we have been holding conversations with each other for a while now, and there are some well-defined approaches to communicating effectively with someone else. I think there are relevant best practices we can extract for the sake of broader AI application. In this AI context, we do need to subtract out the non-verbal cues, as we can’t rely too heavily on seeing a face or body language.

Dialogue

The goal with any conversation is to achieve free-flowing dialogue. I’m personally a big fan of the book Crucial Conversations and the methodologies described within. (I highly recommend it if you haven’t read it!) The techniques span quite a bit of territory, mostly focused on navigating heightened conversations, with tons of self-reflection. However, one idea sits at the linchpin intersection of those techniques: both conversational parties need to feel safe enough to freely contribute to a shared pool of understanding. With safety, open dialogue can be achieved, and then it’s often easier to navigate a tricky conversation.

Safety is rapport

Rapport is something often discussed as needing to be built and then nurtured. It is, by definition, a harmonious relationship where dialogue is flowing freely. Crucial Conversations focuses on safety quite a bit, and for a lot of great reasons. Humans tend to not behave the best when we’re not feeling comfy. I know I’ve certainly lashed out when trying to communicate with a poo-poo chatbot experience.

As I discussed earlier, to keep users coming back to a given conversational interface and achieve adoption, you need to deliver expected outcomes consistently while building trust. To me, this presents another area of overlapping ideas. Safety in a conversation reflects how immediately comfortable someone is to participate, while trust is that sustained level of reliable, consistent and respectful communication. Effective communication leverages rapport, establishing a safe exchange of dialogue, which then opens the door to deepening trust.

How have humans been building trust and safety via conversations all this time?

I’ve identified a set of human conversational skills and qualities that could serve as a foundation for an effective AI communicator: active listening, empathy, clarity and a balanced exchange.

Conversational Skills

Active Listening

Active Listening is critical for effective communication. Being able to truly focus on the speaker, validate and summarize their ideas back to them affirms and demonstrates understanding.

In this digital context, without non-verbal cues to rely on, Active Listening can support safety, rapport, and trust through techniques like paraphrasing expressed ideas to validate understanding. If I were designing and building a Conversational Agent, I’d advocate for Active Listening to be a required, high-priority skill set.

Conversational Design Techniques →

- Paraphrasing, summarizing, and validating understanding: Both paraphrasing and summarizing involve restating what was heard in your own words to confirm comprehension and ensure alignment with the user. This practice helps to avoid miscommunication and misunderstandings. To take it further into AI skill sets, I’d want to make sure our intelligence is verifying with the user that the amassed shared understanding is true to their intentions.

- Showing Engagement: Restating the speaker’s context, either by paraphrasing or summarizing, demonstrates that the listener is actively engaged in the conversation, attentive, and shows respect. This active reflection also helps build trust.

Active Listening is particularly advantageous when applied in troubleshooting scenarios where restating the problem can confirm accuracy, for example: “So, the application freezes every time the ‘save’ button is clicked. Is that correct?”

In short, research suggests paraphrasing and summarizing as dual techniques for reflecting content back to a speaker, ensuring that the message has been accurately absorbed and understood by the listener. The emphasis here is that these dual techniques aim for confirmation of the message in the listener’s own words. This in-conversation validation could easily translate into a usable form of conversational performance metrics, indicating how well the intelligence is truly understanding the user and their needs.
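As one way to picture that in-conversation validation becoming a metric, here is a minimal Python sketch. The `ConfirmationTurn` record and the `confirmation_rate` function are hypothetical names, and the sample turns are invented (apart from the troubleshooting paraphrase quoted above). It simply counts how often users said "yes, that's right" after the assistant reflected their message back.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ConfirmationTurn:
    """One moment where the assistant restated the user's message and asked if it was right."""
    paraphrase: str
    user_confirmed: bool


def confirmation_rate(turns: List[ConfirmationTurn]) -> float:
    """Share of paraphrases the user confirmed; 0.0 when nothing was checked."""
    if not turns:
        return 0.0
    return sum(turn.user_confirmed for turn in turns) / len(turns)


# Invented sample data for illustration.
turns = [
    ConfirmationTurn(
        "So, the application freezes every time the 'save' button is clicked. Is that correct?",
        True,
    ),
    ConfirmationTurn("You're using the desktop version, right?", False),
    ConfirmationTurn("Got it, this is the mobile app. Is that correct?", True),
]

print(f"{confirmation_rate(turns):.2f}")  # 2 of 3 confirmed -> 0.67
```

A low rate would suggest the intelligence is misreading users before it ever gets to solving their problem, which is a different failure from giving a wrong answer.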

Anthropomorphism & empathy

Commonly cited across a variety of frameworks, empathy is another key communication tactic we should shepherd into modern conversational design approaches. In any conversational situation, acknowledging observed emotional impacts and validating someone’s experiences can strengthen safety and build rapport. As described in Crucial Conversations, unattended emotional impacts can be a distraction or deterrent from the true goal.

Layering in empathetic skills can easily lead to a more human-like AI. Interestingly, my research suggests that an AI’s anthropomorphic characteristics (human qualities) only positively influence the interaction quality if the experience is simultaneously able to show empathy. A distinction I’d like to make here is that I’m not simply saying “make it more human.” I’m suggesting ideas that can enable effective conversation, which happen to overlap with some human qualities. And it makes sense: we’re helping an artificial intelligence communicate better WITH US.

Conversational Design Techniques →

- Acknowledge emotions and validate experiences: Being empathetic in this context means weaving small but meaningful acknowledgments of user frustration into the conversation when frustration is detected. This is such a fundamental conversational skill. Effective communicators explicitly show understanding of a user’s frustration or concerns by using empathetic phrases, such as “I understand how frustrating this must be” or “I’m sorry you’re having this trouble”. It is crucial to validate their experience by letting them know their feelings are completely understandable given the context of the situation.

- Tuned tone and pace: Progressively calibrating tone and pace throughout the conversation will better support the user.

- Present as a supportive advocate: Let them know you’re there to help and that they’re valued, offering encouragement and support.

While AI cannot truly feel, an effective AI communicator would be skilled at empathy-driven candor, acknowledging the user and validating their experience. This is vital for overcoming an impersonal and robotic feeling interaction.
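As a rough illustration of acknowledging frustration "if detected," here is a deliberately simple Python sketch. The keyword list and the `with_acknowledgment` helper are assumptions for the example. A production system would more plausibly use a sentiment or intent model than substring matching, so treat this as a picture of where the acknowledgment sits in the response rather than how detection should work.

```python
# Assumed, illustrative markers of frustration; not an exhaustive or validated list.
FRUSTRATION_MARKERS = ("frustrat", "annoy", "still not working", "give up")


def with_acknowledgment(user_message: str, answer: str) -> str:
    """Prefix the answer with an empathetic phrase when frustration is detected."""
    text = user_message.lower()
    if any(marker in text for marker in FRUSTRATION_MARKERS):
        return "I understand how frustrating this must be. " + answer
    return answer


print(with_acknowledgment(
    "This is so frustrating, it's still not working!",
    "Let's try one more thing together.",
))
print(with_acknowledgment("How do I save my file?", "Click the save button."))
```

Note that the neutral question gets a plain answer. Sprinkling empathy phrases on every turn would read as scripted, which works against the rapport the technique is meant to build.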

Clarity

This is as much about clarity as it is about knowing your audience. Tuning language toward the users of the platform can help them have a smoother experience and likely achieve their goal more quickly. Even better if things can adapt and shift with users’ changing levels of expertise across topics.

Conversational Design Techniques →

- Simple, accessible language: Avoid technical jargon. When technical terms are necessary, explain them using everyday metaphors and visuals.

- Set clear expectations: Outline the next steps, timelines, and who will be responsible for follow-up actions.

- Provide visual aids: Utilize screenshots, diagrams, or short video tutorials when necessary, especially for visual learners. Point at things visually to be as clear as possible.

- Confirm understanding: After giving instructions, check to ensure they comprehend what needs to be done. If uncertain, ask them to explain the next steps or walk you through the process.

Balanced Exchange

And finally, to ensure a positive and productive user experience in AI conversations, we must prioritize reducing friction and promoting clarity.

The following three techniques are essential for creating effective and user-friendly conversational interfaces:

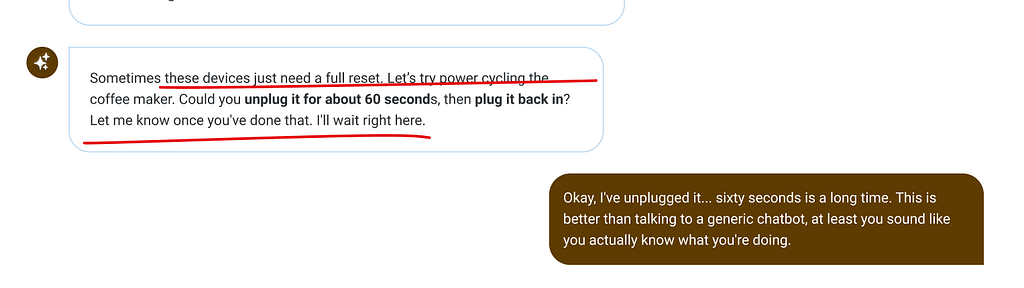

Conversational Design Techniques →

- Cognitively mindful conversation: Engage in conversation “one thought at a time,” to reduce misunderstandings and increase engagement by focusing on a single point before moving on, with intentional pausing for processing. Cognitive research shows that simplifying information and structuring interactions reduces user “mental demand” and “frustration,” as high cognitive load hinders effective interaction.

- Structured Instructions: Provide step-by-step guidance, breaking down complex tasks into smaller, manageable chunks, and confirming understanding after each step. This is another area where metaphors and visual aids can be leveraged.

- Clear human handoffs: Provide obvious and transparent paths to escalate to live agents or humans for complex issues. This addresses fears of impersonal interactions and getting trapped in automated loops, ensuring customers feel supported. Help users avoid dead-ends.
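The structured-instructions and human-handoff techniques above can be combined in a small Python sketch. Everything here is an assumption for illustration: the `guide` function, the `MAX_ATTEMPTS` threshold, the wording of the prompts, and the coffee maker steps are all invented. It delivers one step at a time, checks in after each, and offers a person instead of looping forever.

```python
from typing import Callable, List

MAX_ATTEMPTS = 2  # assumed threshold before offering a human


def guide(steps: List[str], user_confirms: Callable[[str], bool]) -> List[str]:
    """Walk through steps one at a time; escalate to a human if a step keeps failing."""
    transcript = []
    for number, step in enumerate(steps, start=1):
        for _attempt in range(MAX_ATTEMPTS):
            transcript.append(f"Step {number}: {step} Did that work?")
            if user_confirms(step):
                break  # step succeeded, move on to the next one
        else:
            # The step never succeeded: avoid a dead end by offering a person.
            transcript.append("Let me connect you with a member of our support team.")
            return transcript
    transcript.append("All done. Is there anything else I can help with?")
    return transcript


# Invented troubleshooting steps for illustration.
steps = [
    "Unplug the coffee maker and plug it back in.",
    "Hold the Wi-Fi button until the light blinks.",
]

# Simulated user: the first step works, the second never does.
for line in guide(steps, lambda step: step.startswith("Unplug")):
    print(line)
```

In this run the second step is offered twice and then the conversation escalates, so the user is never trapped in an automated loop.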

Summing up

Ultimately, there’s a wealth of conversational techniques to draw on from humans communicating, for better and worse, over the years. Four essential human conversational skills have stood out to me as I’ve explored the space in search of shaping effective AI communication: Active Listening, Empathy, Clarity, and a Balanced Exchange. As we shift deeper into this new technological space, it will be critical to continue examining what human conversational skills we should leverage and when. And maybe more importantly, when not to.

Achieving effective AI-human dialogue depends on the AI’s ability to listen, confirm understanding, simplify technical concepts, and ensure clarity. Just as importantly, the AI should show empathy by acknowledging user frustration and adopting a patient, collaborative approach. I am excited to leverage these perspectives in our approach to Conversational Design as we lay the groundwork for future agentic capabilities. I’d love to hear about your approaches in establishing your own Conversational Design mantra!

The art of conversational flow was originally published in UX Collective on Medium, where people are continuing the conversation by highlighting and responding to this story.