Why design taste became the real constraint in the AI era.

The interface sameness problem

Open almost any new SaaS product today and the interface feels familiar before the product itself becomes clear. Rounded cards, neutral sans-serif typography, soft gradients, spacious layouts, and a persistent AI assistant in the corner have become the default grammar of modern digital products.

Across industries, the patterns repeat:

- Chat-first layouts replacing structured navigation

- Bottom-right AI assistants embedded in the interface

- Reduced dashboard density with increased whitespace

- Gradient hero sections signaling “AI-native” identity

- Simplified onboarding flows optimized for rapid activation

These products may solve entirely different problems, yet increasingly they look like variations of the same interface system. This convergence is not merely trend imitation. It reflects a deeper structural shift in how products are built.

Generation has become abundant

Over the last few years, generative AI has dramatically compressed the distance between intention and execution. Tools such as v0, Cursor, Claude Code, Runway, and Midjourney can produce interfaces, prototypes, motion concepts, and even early product directions in seconds. What once required extended exploration can now emerge from a prompt almost instantly. This changes the economics of design. The constraint is no longer the ability to produce artifacts. Screens, layouts, and visual variations are easy to generate. Production is no longer scarce. AI did not democratize design judgment. It democratized output.

The illusion of design as output

Products exist in the continuity between decisions, in how one state transitions to another, in how interactions behave under edge conditions, and in how the experience holds together over time. Trust does not form from individual screens. It forms from consistency across moments.

Strong products depend on:

- Stable behavior across states

- Clear and predictable interaction logic

- Thoughtful escalation paths

- Coherent tone and feedback

- Alignment between visual identity and lived experience

Generative systems can produce polished snapshots. Products operate as evolving environments. As artifact creation becomes effortless, value shifts upward. The strategic layer above generation becomes more important than ever. The central question changes:

Not “Can we make this?”

But “Should this exist within our system?”

That shift marks the difference between output and authorship.

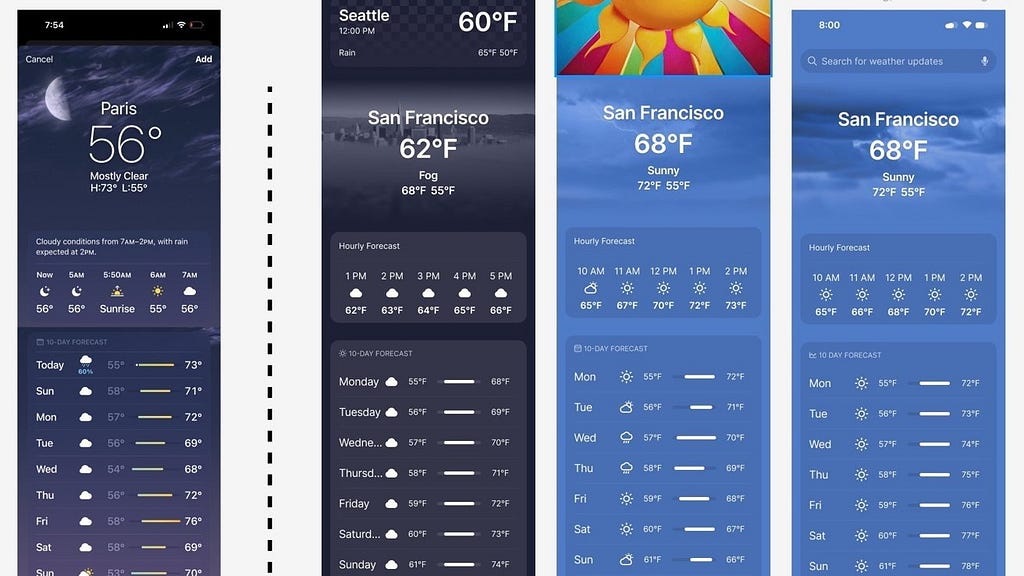

Evidence of probabilistic convergence: the Apple Weather pattern

This tension is already visible across much of the startup ecosystem. Browse enough AI-generated landing pages or interfaces assembled through generative workflows and a pattern becomes clear: individual screens may look polished, yet the overall experience often feels interchangeable. The issue is rarely technical competence. It is conceptual ownership. Generative systems trained on vast distributions of existing interfaces tend to optimize toward statistically reinforced versions of “good UI.” Because they learn from large datasets that encode widely accepted design patterns, their outputs gravitate toward what appears most probable, familiar, and usable.

Early experiments with AI-generated mobile interfaces frequently produced layouts resembling highly cited reference products such as the Apple Weather app. This was not intentional imitation. It was convergence around patterns strongly represented in training data and widely recognized as effective.

The Weather app reflects many qualities that dominate usability benchmarks:

- Clean state-based structure

- Minimal visual noise

- Clear hierarchy

- Well-defined information architecture

- Reduced cognitive load

These traits appear repeatedly in design systems, case studies, and interface libraries. As a result, they become statistically reinforced signals of quality. Generative models, optimizing for coherence and usability, often reproduce similar structural logic. This is not copying in intent. It is convergence in probability space. When many teams rely on similar generative tools trained on overlapping datasets, the range of likely outputs narrows. Interfaces begin to resemble one another not because designers lack skill, but because the underlying probability distribution favors familiar configurations. Competence becomes common. Distinction becomes harder.

The false idea that prototyping is dead

This is where much of the current conversation around AI and product design becomes misleading. A common claim circulating inside AI-first product teams is that prototyping is becoming obsolete because AI can already generate fully formed interfaces instantly. That conclusion only makes sense if prototyping is reduced to screen production, which it never truly was. Prototyping has always been less about producing polished visuals than about exposing uncertainty inside a system before it hardens into product reality. It is where interaction models are tested against ambiguity, where behavioral contradictions emerge, and where teams discover whether an experience can sustain coherence beyond a single idealized state.

What AI has removed is not prototyping itself, but the friction previously required to create artifacts that looked finished. This distinction is critical because many contemporary “prototypes” are no longer exploratory tools; they are polished snapshots of isolated states. They communicate resolution without necessarily containing evidence that the underlying experience can survive complexity, scale, or time. As a result, prototyping has not disappeared so much as migrated upward. The center of gravity has shifted away from screen production and toward system definition.

Prototyping has moved into design taste

This is the deeper structural transition unfolding beneath the current AI wave. As generation becomes effectively infinite, design value migrates away from execution and toward judgment. Not judgment in the purely aesthetic sense, but judgment as governance: the ability to define what must remain consistent across an experience, what can vary without breaking coherence, and what should never exist at all even if it appears locally attractive. In other words, design taste becomes less about visual preference and more about maintaining system integrity under conditions of extreme generative abundance.

This matters because generative systems naturally converge toward familiarity. When AI models are trained on similar datasets and optimized around similar heuristics of usability and modernity, the output space compresses. The same interaction structures, typographic systems, onboarding patterns, and compositional logic begin to repeat across unrelated products and industries. This convergence is already visible across large portions of AI-generated SaaS design, where many products share the same visual grammar regardless of brand, audience, or purpose. The issue is not that these interfaces are poorly designed. In many cases they are highly competent. The problem is that competence alone no longer creates distinction.

This is also why a counter-movement is quietly emerging across contemporary design practice. Rather than pursuing further visual refinement, many designers are deliberately reintroducing irregularity, texture, asymmetry, editorial density, and friction into digital experiences. This is not nostalgia for pre-digital aesthetics. It is an attempt to restore authorship inside an environment where visual fluency has become automated. As generative systems standardize polish, differentiation increasingly depends on visible intentionality.

Constraint as strategy: Airbnb

Some companies are responding by strengthening system-level authorship rather than relying on generative uniformity.

Airbnb demonstrates how constraint can function as strategy. Rather than treating visual design as surface styling, Airbnb’s system integrates typography, motion, photography, illustration, onboarding flows, and category structure into a coherent narrative logic. Major updates, including category-based exploration and interface redesigns, were introduced not as isolated feature changes but as extensions of a broader experiential framework. The result is recognizability at the behavioral level. Airbnb’s differentiation does not depend on novelty in individual screens. It depends on maintaining coherence across the entire system. That coherence reflects deliberate constraint, a strong design point of view applied consistently.

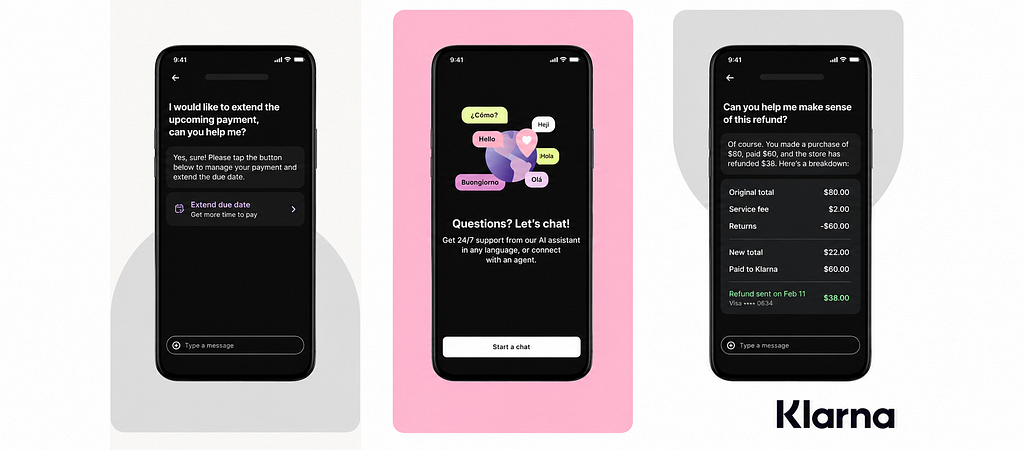

When output optimization breaks coherence: Klarna

The phrase “AI slop” is often used casually to describe low-quality generative output, but in practice it points to something more structural: the collapse of context-specific decision-making into statistically safe defaults. Generative systems optimize toward familiarity because familiarity is probabilistically reliable. Neutral typography, soft gradients, rounded containers, spacious layouts, standardized hero sections, and predictable call-to-action structures are not inherently flawed choices. They simply become interchangeable when repeated endlessly across unrelated contexts. At that point, polish ceases to communicate intentionality and instead begins to signal the absence of a clear point of view.

The pattern extends well beyond interface design.

In 2024, Klarna publicly emphasized how its OpenAI-powered assistant was handling work equivalent to hundreds of customer support agents. The system delivered impressive efficiency gains: faster responses, reduced operational costs, and increased automation coverage. However, the company later adjusted its approach, putting renewed emphasis on human support after recognizing quality tradeoffs.

Several things can break in highly optimized generative systems:

- Tone consistency across interactions

- Edge-case handling beyond common scenarios

- Escalation logic for complex situations

- Trust perception in emotionally sensitive contexts

Automation can optimize response speed. But users evaluate experience holistically.

Trust depends on coherence, not just output performance. When systems lack strong governance over escalation and tone, efficiency alone does not guarantee satisfaction.

This reinforces a broader principle: generation is powerful, but without design taste guiding constraints, output does not automatically translate into durable experience quality.

The system problem: design lives above generation

This is why the future role of design is changing so fundamentally. Historically, much of digital product design revolved around reducing the friction of production. Designers translated strategy into screens, systems, and interaction models because creating those artifacts required specialized labor. AI dramatically lowers that production barrier. But once execution becomes abundant, scarcity moves upward. The new constraint is no longer the ability to produce possibilities. It is the ability to select, constrain, and maintain coherence among them.

At the bottom of the stack sits generation: models, tools, and automation systems capable of producing infinite variations quickly and at scale. Above that sits design taste: the layer where constraints are defined, coherence is enforced, and decisions about what should and should not exist are made. Above that sits culture: the narrative layer that shapes identity, meaning, and direction before any interface is created at all. Most generative systems operate almost entirely at the generation layer. That is why they feel so powerful while simultaneously producing outputs that increasingly resemble one another. The technology compresses production. It does not automatically create authorship.

Why design taste becomes the real constraint

AI systems are optimized for expansion. Design operates through selection. Every strong product is ultimately defined as much by what it refuses to become as by what it includes. This is the layer generative systems do not resolve automatically because statistical optimization cannot independently produce authorship, narrative direction, or durable identity. Those qualities emerge from constraint. They emerge from deciding which patterns deserve persistence and which should be rejected even when they appear superficially effective.

As generation becomes effectively infinite, execution itself loses strategic value. When anyone can generate polished interfaces instantly, polish stops functioning as differentiation. The competitive advantage shifts upward toward system governance: the ability to maintain a coherent point of view while products evolve across platforms, contexts, and time. In this environment, design taste becomes less about aesthetics and more about preserving conceptual integrity under conditions of abundance.

UX is quietly becoming narrative structure

As products become increasingly adaptive and AI-driven, interfaces are no longer static compositions that remain fixed after launch. They increasingly behave as evolving systems shaped by behavior, context, memory, and personalization over time. Under those conditions, UX design starts to resemble narrative structure more than static visual composition. The central question is no longer whether a single interface state appears polished in isolation, but whether the system continues to feel coherent as it changes. That requires governance. It requires continuity. It requires design taste operating across time rather than merely across screens.

The future designer is therefore likely to function less as a screen-maker and more as a systems editor: someone responsible for defining the logic, boundaries, memory, and behavioral consistency that allow adaptive products to remain intelligible as they evolve. In the AI era, the challenge is no longer producing interfaces. It is maintaining meaning across systems capable of generating endless variation.

A counterpoint: AI can expand exploration

It is worth acknowledging a reasonable counterargument. Good teams using AI may move faster and explore more design directions than before. Generative tools can expand creative possibility, enabling rapid iteration and broader experimentation. This is true. However, exploration alone does not create identity. Without strong design taste guiding selection and constraint, increased output can still result in convergence. The differentiator is not how much is generated, but what is chosen, refined, and maintained over time.

Differentiation through coherence

AI has made creation abundant. That abundance changes what becomes valuable. When anyone can generate polished interfaces instantly, polish itself stops functioning as differentiation. Coherence becomes differentiation. Constraint becomes differentiation. Point of view becomes differentiation.

Design taste becomes the real constraint.

The future advantage may increasingly depend not on the ability to generate more possibilities, but on the ability to define strong constraints, maintain narrative continuity, and preserve system integrity while using tools that can produce nearly infinite variations.

In the AI era, value may lie less in output volume and more in authorship.

“AI made everyone a creator, not a designer” was originally published in UX Collective on Medium.